
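The RK3588 integrates an NPU rated at 6 TOPS. With the yolov8n model, YOLOv8 deployed on it can reach roughly 30 to 50 FPS of real-time object detection. Both model conversion and on-board inference go through Rockchip's RKNN Toolkit2 toolchain. The steps below cover the full hands-on workflow: environment setup, model conversion, and on-board deployment.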

I. Preparation (Hardware and Software)

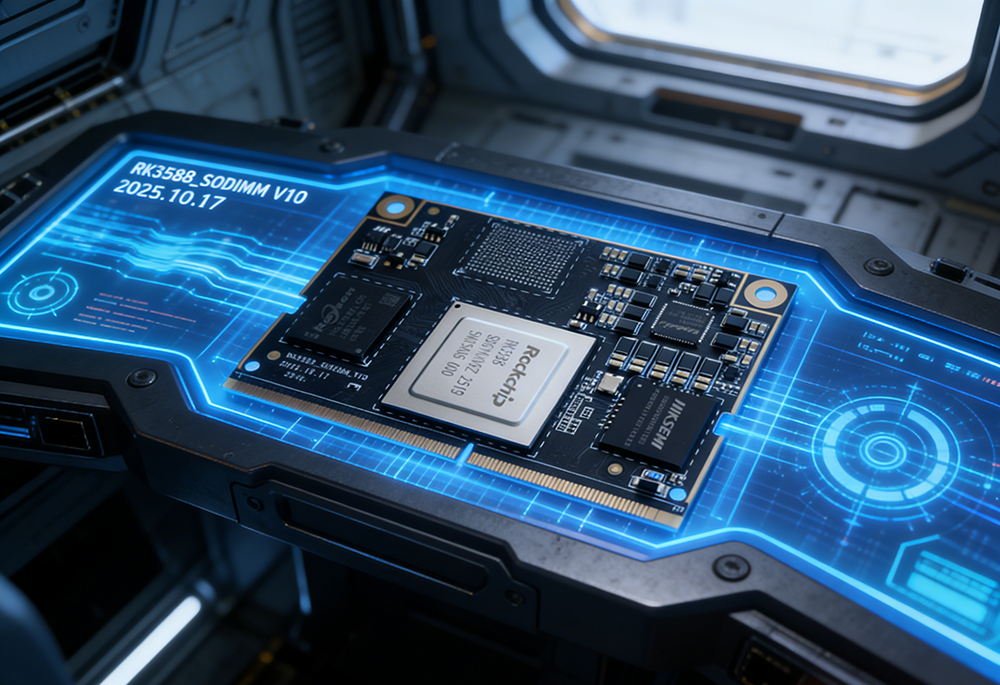

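1. Hardware list

RK3588 development board (Wanwu Zongheng (万物纵横) DM3588, Orange Pi 5/5B, Radxa Rock 5B, EmbedFire (野火) RK3588, etc.)

PC running Ubuntu 20.04/22.04 x86_64 (model conversion is supported on Linux only)

USB or MIPI camera, Ethernet cable, SD card (≥16 GB)

Power adapter for the board

2. Software versions (latest stable releases as of 2026)

RKNN Toolkit2: v2.3.2 (model conversion on the PC)

RKNN Toolkit Lite2: v2.3.2 (inference on the board)

YOLOv8: ultralytics>=8.0.200

Python: 3.10 on the PC, 3.8 or 3.10 on the board

Board OS: Ubuntu 20.04/22.04 (Debian 11 also works)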

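II. PC Environment Setup (Model Conversion)

1. Install Miniconda (isolated environment)

wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh
bash Miniconda3-latest-Linux-x86_64.sh -b
# Batch mode (-b) does not modify ~/.bashrc, so register conda with the shell first
~/miniconda3/bin/conda init bash
source ~/.bashrc
# Create a dedicated RK3588 environment
conda create -n rk3588 python=3.10 -y
conda activate rk3588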

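2. Install RKNN Toolkit2

# Download the official toolkit
# (if this repository does not contain the 2.3.2 packages, check the
# airockchip/rknn-toolkit2 repository on GitHub, where the 2.x releases are published)
git clone https://github.com/rockchip-linux/rknn-toolkit2.git
cd rknn-toolkit2/packages/x86_64
# Install dependencies
pip install -r requirements_cp310-2.3.2.txt
# Install the RKNN toolkit itself (adjust the file name to the wheel present in this directory)
pip install rknn_toolkit2-2.3.2-cp310-cp310-manylinux_2_17_x86_64.whl
# Verify the installation: the import must succeed, and pip reports the installed version
python -c "from rknn.api import RKNN; print('rknn-toolkit2 import OK')"
pip show rknn-toolkit2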

3. Install YOLOv8

pip install ultralytics opencv-python onnx onnxsim
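
Before moving on to model conversion, it can help to confirm which versions actually landed in the conda environment. The following is a minimal sketch using only the Python standard library; the helper name `installed_version` is made up for illustration, and `rknn-toolkit2` is assumed to be the distribution name under which the wheel registers itself.

```python
from importlib import metadata


def installed_version(dist_name):
    """Return the installed version string of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    # Distribution names are assumptions; adjust them to what `pip list` shows.
    for name in ("rknn-toolkit2", "ultralytics", "onnx"):
        print(name, installed_version(name) or "not installed")
```

If any line prints "not installed", rerun the corresponding pip command inside the activated rk3588 environment.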

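III. YOLOv8 Model Conversion (PyTorch → ONNX → RKNN)

1. Export the ONNX model (simplified and optimized)

from ultralytics import YOLO

# Load a pretrained or custom model
model = YOLO("yolov8n.pt")  # can be replaced with yolov8s/m/l, etc.

# Export to ONNX (simplify is required for RKNN compatibility)
model.export(
    format="onnx",
    imgsz=640,
    simplify=True,
    opset=12,
    batch=1,
)
# Output: yolov8n.onnx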
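
Knowing the shape of the exported output makes the later postprocessing easier to reason about. The sketch below assumes the stock ultralytics detection head (strides 8, 16, and 32, one prediction per grid cell) and the 80 COCO classes; the function name `yolov8_output_shape` is illustrative only. Each prediction carries 4 box values plus one score per class, and YOLOv8 has no separate objectness channel.

```python
def yolov8_output_shape(imgsz=640, num_classes=80, strides=(8, 16, 32)):
    """Shape of the single output tensor of a standard YOLOv8 detection export."""
    # One prediction per grid cell at each of the three feature-map scales.
    cells = sum((imgsz // s) ** 2 for s in strides)
    # Channels: cx, cy, w, h followed by the per-class scores.
    return (1, 4 + num_classes, cells)


print(yolov8_output_shape())           # (1, 84, 8400)
print(yolov8_output_shape(imgsz=480))  # (1, 84, 4725)
```

A custom model with a different class count changes only the middle dimension.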

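2. Convert ONNX to RKNN (FP16 or INT8 quantization)

Create convert2rknn.py:

from rknn.api import RKNN

# Settings
RKNN_MODEL = "yolov8n_rk3588.rknn"
ONNX_MODEL = "yolov8n.onnx"
TARGET = "rk3588"

def check(ret, step):
    # RKNN API calls return 0 on success
    if ret != 0:
        raise SystemExit(f"{step} failed (ret={ret})")

# Initialize RKNN
rknn = RKNN(verbose=True)

# Configure the NPU. With mean 0 and std 255 the converted model scales
# 0-255 input to 0-1 internally. FP16 needs no quantized_dtype setting.
rknn.config(
    mean_values=[[0, 0, 0]],
    std_values=[[255, 255, 255]],
    target_platform=TARGET,
)

# Load the ONNX model
check(rknn.load_onnx(model=ONNX_MODEL), "load_onnx")

# Build the model: do_quantization=False gives FP16. For INT8 use
# do_quantization=True together with dataset=<calibration list file>.
check(rknn.build(do_quantization=False), "build")

# Export the RKNN model
check(rknn.export_rknn(RKNN_MODEL), "export_rknn")

# Release resources
rknn.release()
print("Conversion finished. Generated:", RKNN_MODEL)

Run the conversion:

python convert2rknn.py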
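
The mean_values and std_values passed to rknn.config describe a per-channel (x - mean) / std step that the converted model applies to its input. The short arithmetic sketch below (the function name `normalize` is illustrative) shows what mean 0 and std 255 do to raw pixel values, which is why the model can be fed 0-255 image data directly.

```python
def normalize(pixel, mean=0.0, std=255.0):
    """Per-channel normalization described by mean_values and std_values."""
    return (pixel - mean) / std


for p in (0, 114, 255):
    print(p, "->", round(normalize(p), 4))
# 0 -> 0.0
# 114 -> 0.4471
# 255 -> 1.0
```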

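Choosing a quantization mode:

FP16: accuracy loss under 1%, no calibration set needed, fast

INT8: about 30% faster, needs 100 to 200 calibration images, accuracy loss under 3%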
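
For INT8, RKNN Toolkit2 reads the calibration set from a text file that lists one image path per line. The sketch below builds such a file from a folder of images; the function name, the default file name dataset.txt, and the folder layout are assumptions for illustration.

```python
from pathlib import Path


def write_calibration_list(image_dir, out_file="dataset.txt", limit=200):
    """Write up to `limit` image paths, one per line, and return how many were written."""
    exts = {".jpg", ".jpeg", ".png", ".bmp"}
    paths = sorted(p for p in Path(image_dir).iterdir() if p.suffix.lower() in exts)
    paths = paths[:limit]
    Path(out_file).write_text("".join(f"{p.resolve()}\n" for p in paths))
    return len(paths)


# In convert2rknn.py the list would then be passed to the build step:
#   rknn.build(do_quantization=True, dataset="dataset.txt")
```

The calibration images should resemble what the camera will see in deployment; a set drawn from the training or validation data is a reasonable starting point.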

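IV. RK3588 Board Environment Setup

1. Flash the system and do basic configuration

Download the official Ubuntu image and flash it to the SD card with Etcher.

Boot the board, configure the network, and enable SSH: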

sudo apt update && sudo apt install openssh-server -y

sudo systemctl enable ssh

2. Install RKNN Toolkit Lite2 (on-board inference)

# Create a working directory (on the board)
mkdir -p ~/yolov8_rk3588 && cd ~/yolov8_rk3588
# Copy the Lite2 wheel and the RKNN model from the PC
# Run on the PC:
scp yolov8n_rk3588.rknn ubuntu@[board IP]:~/yolov8_rk3588/
scp ~/rknn-toolkit2/packages/aarch64/rknn_toolkit_lite2-2.3.2-cp310-cp310-linux_aarch64.whl ubuntu@[board IP]:~/yolov8_rk3588/
# Install dependencies on the board
sudo apt install -y python3-pip python3-opencv libopencv-dev
# Install Lite2
pip install rknn_toolkit_lite2-2.3.2-cp310-cp310-linux_aarch64.whl

V. On-Board YOLOv8 Inference Code (Python)

Create yolov8_rknn_infer.py:

import cv2
import numpy as np
from rknnlite.api import RKNNLite

# Settings
RKNN_MODEL = "yolov8n_rk3588.rknn"
IMG_SIZE = 640
CONF_THRES = 0.25
IOU_THRES = 0.45

# Initialize RKNN Lite (both calls return 0 on success)
rknn = RKNNLite()
if rknn.load_rknn(RKNN_MODEL) != 0:
    raise SystemExit("load_rknn failed")
if rknn.init_runtime() != 0:  # runs on the NPU automatically
    raise SystemExit("init_runtime failed")

# Preprocessing: letterbox to IMG_SIZE x IMG_SIZE. The model was converted with
# mean 0 / std 255, so it normalizes internally: feed uint8 RGB in NHWC layout.
def preprocess(img):
    h, w = img.shape[:2]
    scale = min(IMG_SIZE / h, IMG_SIZE / w)
    new_w, new_h = int(w * scale), int(h * scale)
    img_resize = cv2.resize(img, (new_w, new_h))
    pad_w, pad_h = IMG_SIZE - new_w, IMG_SIZE - new_h
    img_pad = cv2.copyMakeBorder(img_resize, pad_h // 2, pad_h - pad_h // 2,
                                 pad_w // 2, pad_w - pad_w // 2,
                                 cv2.BORDER_CONSTANT, value=(114, 114, 114))
    img_pad = cv2.cvtColor(img_pad, cv2.COLOR_BGR2RGB)  # OpenCV loads BGR
    return np.expand_dims(img_pad, axis=0), (scale, pad_w // 2, pad_h // 2)

# Postprocessing (decode + NMS). A standard YOLOv8 export outputs
# (1, 4 + num_classes, N): cx, cy, w, h plus class scores, with no objectness.
def postprocess(output, scale, pad_w, pad_h):
    pred = np.squeeze(output[0])
    if pred.shape[0] < pred.shape[1]:
        pred = pred.T  # -> (N, 4 + num_classes)
    boxes = pred[:, :4]
    scores = np.max(pred[:, 4:], axis=1)
    classes = np.argmax(pred[:, 4:], axis=1)
    # Confidence filter
    mask = scores > CONF_THRES
    boxes, scores, classes = boxes[mask], scores[mask], classes[mask]
    # Restore coordinates: center format -> top-left x, y, w, h in the original image
    x = (boxes[:, 0] - boxes[:, 2] / 2 - pad_w) / scale
    y = (boxes[:, 1] - boxes[:, 3] / 2 - pad_h) / scale
    xywh = np.stack([x, y, boxes[:, 2] / scale, boxes[:, 3] / scale], axis=1)
    # NMS (cv2.dnn.NMSBoxes expects x, y, w, h boxes)
    indices = cv2.dnn.NMSBoxes(xywh.tolist(), scores.tolist(), CONF_THRES, IOU_THRES)
    if len(indices) > 0:
        indices = np.array(indices).flatten()
        xyxy = np.concatenate([xywh[:, :2], xywh[:, :2] + xywh[:, 2:]], axis=1)
        return xyxy[indices], scores[indices], classes[indices]
    return [], [], []

# Inference test
if __name__ == "__main__":
    # Read the image
    img = cv2.imread("test.jpg")
    # Preprocess
    img_tensor, (scale, pad_w, pad_h) = preprocess(img)
    # Run inference on the NPU
    outputs = rknn.inference(inputs=[img_tensor])
    # Postprocess
    boxes, scores, classes = postprocess(outputs, scale, pad_w, pad_h)
    # Draw the boxes
    for box, score, cls in zip(boxes, scores, classes):
        x1, y1, x2, y2 = map(int, box)
        cv2.rectangle(img, (x1, y1), (x2, y2), (0, 255, 0), 2)
        cv2.putText(img, f"cls:{cls} {score:.2f}",
                    (x1, y1 - 10), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 255, 0), 2)
    # Save the result
    cv2.imwrite("result.jpg", img)
    print("Inference finished, saved to result.jpg")
    rknn.release()
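
The coordinate restoration in postprocess undoes the letterbox: subtract the padding offset, then divide by the scale. The worked example below runs that arithmetic for a 1280×720 frame in pure Python, with no OpenCV or NPU needed; the helper names `letterbox_params` and `to_original` are illustrative and not part of any library.

```python
def letterbox_params(w, h, size=640):
    """Return (scale, new_w, new_h, pad_left, pad_top) for an aspect-preserving resize."""
    scale = min(size / h, size / w)
    new_w, new_h = int(w * scale), int(h * scale)
    return scale, new_w, new_h, (size - new_w) // 2, (size - new_h) // 2


def to_original(x, y, scale, pad_left, pad_top):
    """Map a point from letterboxed coordinates back to the source image."""
    return (x - pad_left) / scale, (y - pad_top) / scale


scale, new_w, new_h, pad_left, pad_top = letterbox_params(1280, 720)
print(scale, new_w, new_h, pad_left, pad_top)           # 0.5 640 360 0 140
print(to_original(320, 320, scale, pad_left, pad_top))  # (640.0, 360.0)
```

The center of the 640×640 letterboxed image maps back to the center of the 1280×720 frame, which is a quick sanity check for any box-decoding code.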

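VI. Running and Performance Testing

# Run on the board
python yolov8_rknn_infer.py
# Time NPU inference only (100 loops). timeit prints the time per loop; FPS ≈ 1 / that time.
python -m timeit -n 100 -s "import cv2; from yolov8_rknn_infer import rknn, preprocess; x = preprocess(cv2.imread('test.jpg'))[0]" "rknn.inference(inputs=[x])"

Approximate NPU inference throughput on RK3588:

yolov8n: about 40 to 50 FPS

yolov8s: about 25 to 35 FPS

yolov8m: about 15 to 20 FPS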
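
To check these figures on your own board, a small timing helper gives an FPS number directly. The sketch below is generic Python: `measure_fps` is an illustrative name, and the lambda is a stand-in workload so the snippet runs anywhere. On the board, the callable would wrap the rknn.inference call from the script above.

```python
import time


def measure_fps(fn, warmup=5, iters=50):
    """Call fn repeatedly and return the average number of calls per second."""
    for _ in range(warmup):  # warm-up runs are excluded from the timing
        fn()
    start = time.perf_counter()
    for _ in range(iters):
        fn()
    elapsed = time.perf_counter() - start
    return iters / elapsed


# Stand-in workload; on the board use something like:
#   measure_fps(lambda: rknn.inference(inputs=[img_tensor]))
fps = measure_fps(lambda: sum(range(1000)), warmup=2, iters=20)
print(f"{fps:.1f} calls per second")
```

Timing only the inference call excludes preprocessing, postprocessing, and camera capture, so end-to-end FPS will be lower than the number measured this way.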

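VII. Common Problems and Optimization

1. Model conversion fails

Make sure the ONNX model was exported with simplify=True and opset=12.

Reduce the input size (for example 640 → 480).

2. On-board inference is slow

Enable INT8 quantization.

Run on all three NPU cores: rknn.init_runtime(core_mask=RKNNLite.NPU_CORE_0_1_2).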

3. Detections are inaccurate

Check the coordinate conversion in preprocessing and postprocessing.

Tune CONF_THRES and IOU_THRES.
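
To see how the two thresholds interact, here is a plain-Python greedy NMS. It is a sketch of the general technique rather than the OpenCV implementation; boxes are (x1, y1, x2, y2) tuples, and the function names are illustrative.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, conf_thres=0.25, iou_thres=0.45):
    """Greedy NMS; returns indices of the kept boxes, highest score first."""
    order = sorted((i for i, s in enumerate(scores) if s > conf_thres),
                   key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_thres for k in keep):
            keep.append(i)
    return keep


boxes = [(100, 100, 200, 200), (110, 110, 210, 210), (300, 300, 400, 400)]
scores = [0.9, 0.8, 0.3]
print(nms(boxes, scores))                  # [0, 2]
print(nms(boxes, scores, iou_thres=0.7))   # [0, 1, 2]
print(nms(boxes, scores, conf_thres=0.5))  # [0]
```

The first two boxes overlap with an IoU of about 0.68, so raising IOU_THRES to 0.7 keeps both (more duplicates), while raising CONF_THRES drops the weak third detection (fewer false positives, more misses).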

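4. Real-time camera inference

Replace the image read with cv2.VideoCapture(0).

Add multithreading so that preprocessing, inference, and display run in separate threads.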
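
One way to separate the stages is a chain of worker threads connected by bounded queues. The sketch below uses only the standard library and stand-in functions so that it runs without a camera or NPU; `stage`, `run_pipeline`, and the lambdas are illustrative. On the board, the frame source would be a cv2.VideoCapture read loop, and the two stage functions would wrap preprocess and rknn.inference.

```python
import queue
import threading

STOP = object()  # sentinel that tells the next stage to shut down


def stage(fn, q_in, q_out):
    """Apply fn to every item from q_in and forward the result to q_out."""
    while True:
        item = q_in.get()
        if item is STOP:
            q_out.put(STOP)
            break
        q_out.put(fn(item))


def run_pipeline(frames, preprocess_fn, infer_fn, maxsize=4):
    """Run capture -> preprocess -> inference in separate threads; return results in order."""
    q_raw, q_pre, q_out = (queue.Queue(maxsize) for _ in range(3))

    def feed():
        # Stands in for the camera capture loop.
        for frame in frames:
            q_raw.put(frame)
        q_raw.put(STOP)

    workers = [
        threading.Thread(target=feed),
        threading.Thread(target=stage, args=(preprocess_fn, q_raw, q_pre)),
        threading.Thread(target=stage, args=(infer_fn, q_pre, q_out)),
    ]
    for t in workers:
        t.start()
    results = []
    while True:  # the calling thread plays the role of the display stage
        item = q_out.get()
        if item is STOP:
            break
        results.append(item)
    for t in workers:
        t.join()
    return results


print(run_pipeline(range(10), lambda x: x * 2, lambda x: x + 1))
# [1, 3, 5, 7, 9, 11, 13, 15, 17, 19]
```

The bounded queues apply back-pressure: if inference is the bottleneck, capture blocks instead of piling up stale frames. For a live view it may be preferable to drop old frames instead, which this sketch does not do.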

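VIII. Advanced: C++ Deployment (Industrial Grade)

For lower latency and better stability, use the RKNN C++ API:

1. Install the aarch64 cross-compilation toolchain.

2. Build from the example in rknn-toolkit2/examples/yolov8/.

3. This route supports multiple video streams, running several models in parallel, and hardware video encoding/decoding.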
